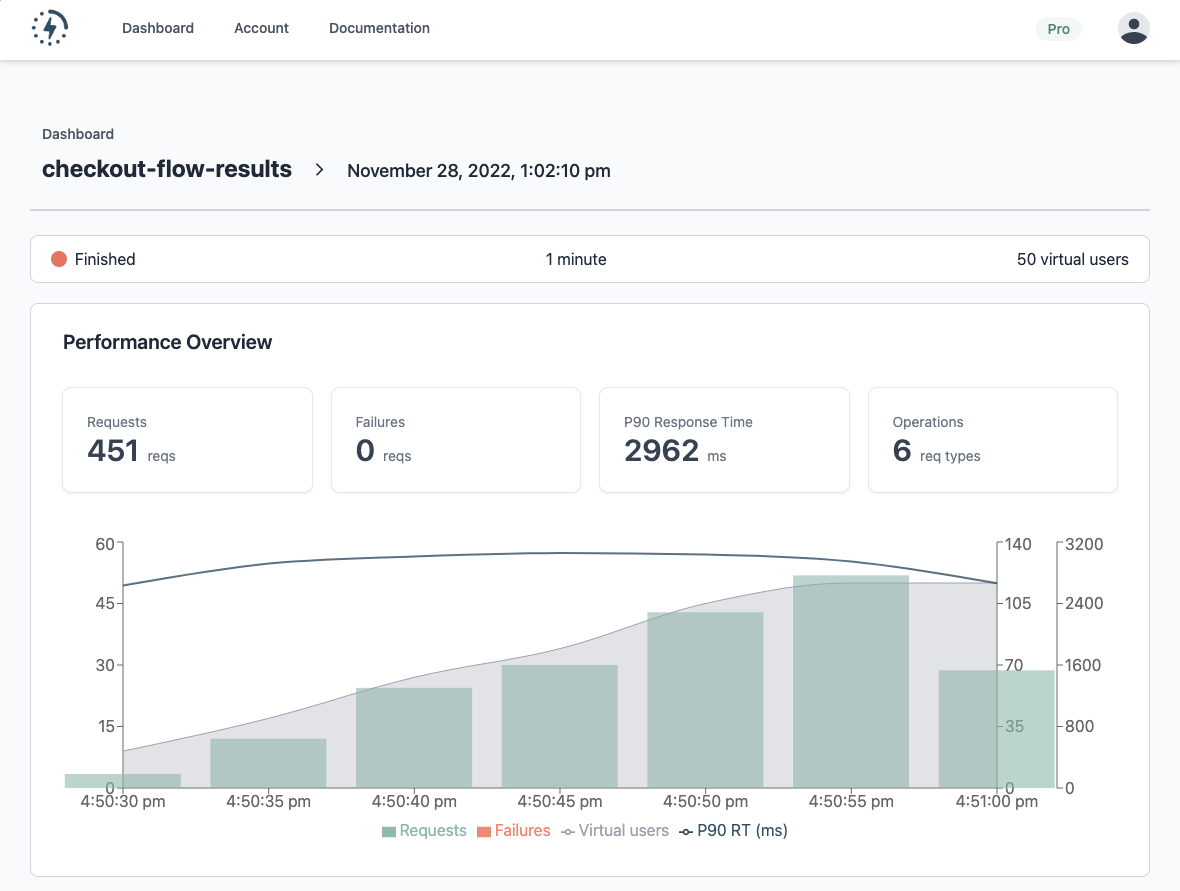

Performance test thresholds are now available to all Latency Lingo users! This feature helps software teams monitor the health of their performance tests and identify performance regressions.

Performance testing is an essential part of the software development process. It helps teams ensure that their applications can handle the expected workload and provide a good user experience. However, manually analyzing and interpreting performance test results can be time-consuming and error-prone.

Latency Lingo solves this problem by providing a comprehensive, easy-to-use platform for analyzing performance test results, and the new thresholds feature takes this to the next level.

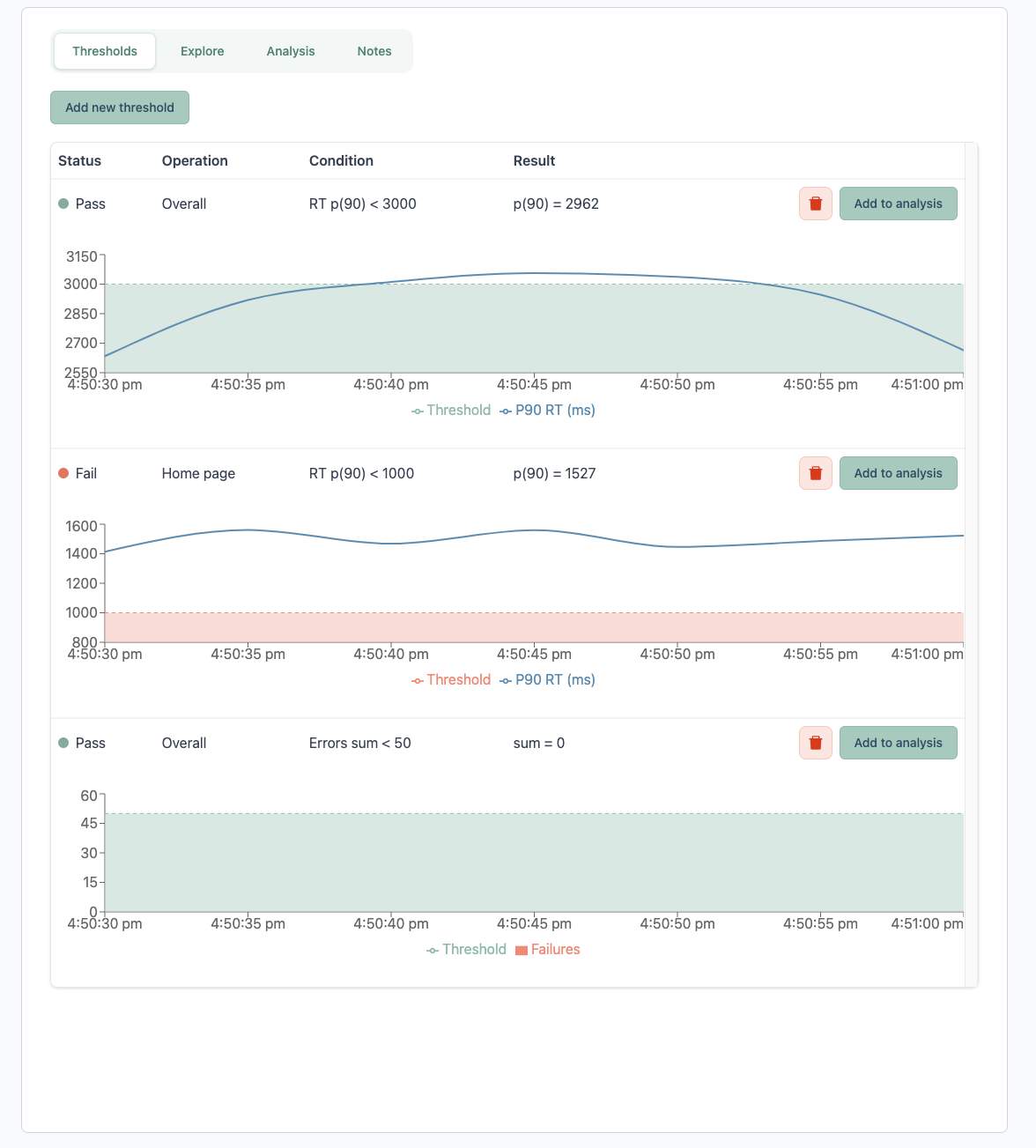

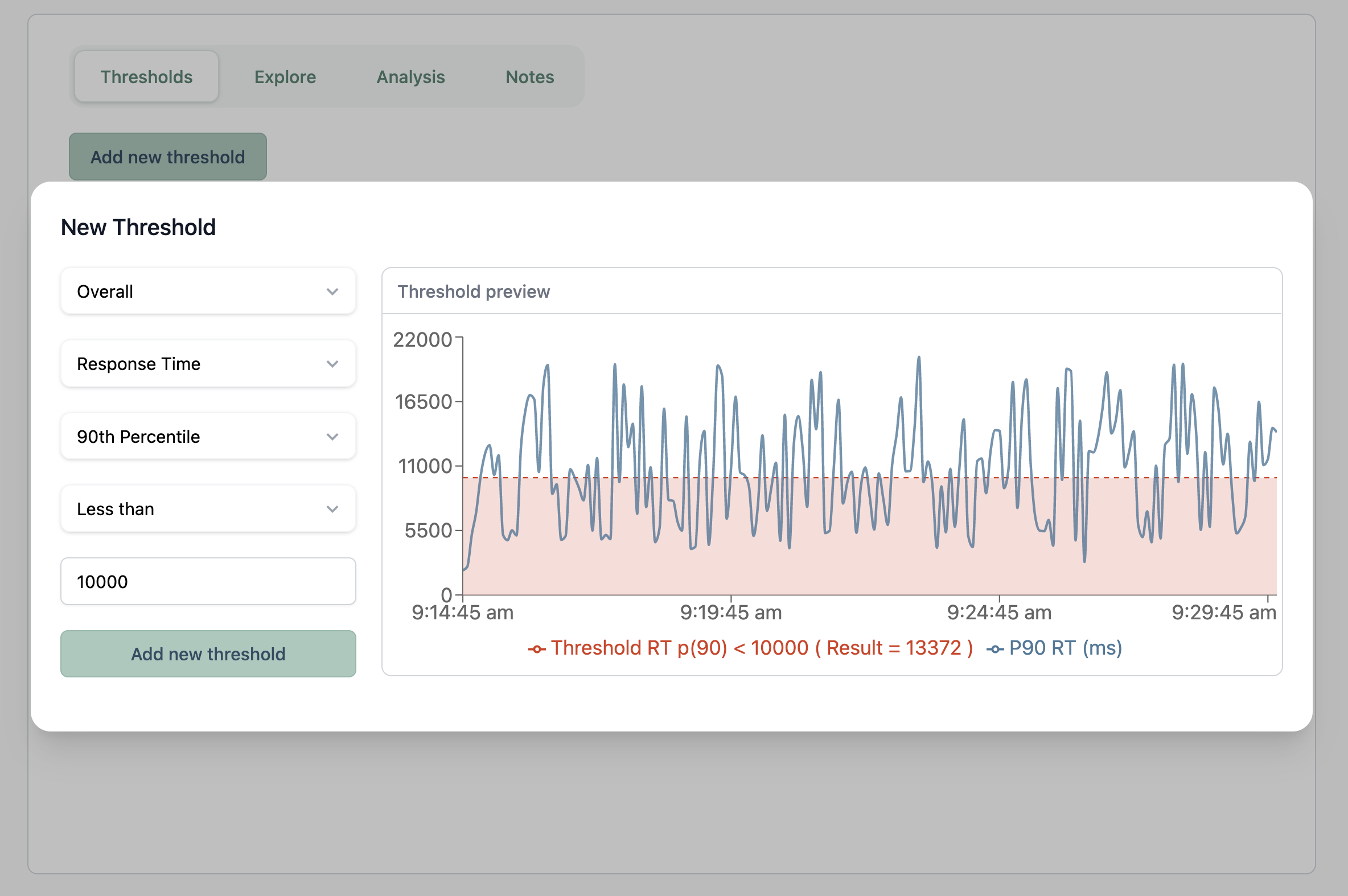

Thresholds allow teams to define pass/fail conditions for their performance tests, based on metrics such as response time, error count, and throughput. This makes it easy for teams to ensure they are meeting their performance requirements and objectives.
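To make the idea of a pass/fail condition concrete, here is a minimal sketch in Python of how a threshold check could work in principle. It is an illustration only and not Latency Lingo's implementation or API: the `Threshold` class, the `evaluate` function, the metric names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass
import operator

# Hypothetical comparison operators a threshold may use (illustration only).
_OPS = {"<": operator.lt, "<=": operator.le, ">": operator.gt, ">=": operator.ge}


@dataclass(frozen=True)
class Threshold:
    metric: str   # hypothetical metric name, e.g. "p95_response_ms"
    op: str       # one of the keys in _OPS
    limit: float  # value the observed metric is compared against


def evaluate(thresholds, metrics):
    """Return a list of (threshold, observed value, passed) tuples."""
    results = []
    for t in thresholds:
        observed = metrics[t.metric]
        results.append((t, observed, _OPS[t.op](observed, t.limit)))
    return results


# Made-up thresholds covering response time, error count, and throughput.
thresholds = [
    Threshold("p95_response_ms", "<", 500),
    Threshold("error_count", "<=", 10),
    Threshold("requests_per_second", ">=", 100),
]

# Made-up summary metrics from a single test run.
metrics = {"p95_response_ms": 430.0, "error_count": 12, "requests_per_second": 150.0}

results = evaluate(thresholds, metrics)
for t, observed, passed in results:
    status = "PASS" if passed else "FAIL"
    print(f"{status} {t.metric} {t.op} {t.limit} (observed {observed})")
```

In this made-up run, the response time and throughput conditions pass while the error count condition fails, so the run as a whole would count as failed.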

Since Latency Lingo can integrate with any load test tool, such as JMeter, k6, or Gatling, you no longer need to rely on a custom process to programmatically evaluate the test results from open-source tools. Instead, you can set up your tests to automatically send results to Latency Lingo, then use thresholds to monitor the health of your tests and get notified when one fails.

Thresholds are also designed to integrate easily with your CI/CD test automation pipeline, such as GitHub Actions or Jenkins. With this feature, you can use Latency Lingo to help determine your build status based on the results of your automated performance tests. This allows you to catch performance issues early in the development process, before they become a problem in production.
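As a rough sketch of how threshold outcomes could drive a build status, the Python snippet below maps a set of pass/fail results to a process exit code. It relies only on the general convention that CI systems such as GitHub Actions and Jenkins mark a step as failed when its command exits with a non-zero status. The `build_exit_code` function and the sample outcomes are hypothetical, and how the outcomes are obtained from Latency Lingo is deliberately left out; see the documentation for the supported integration.

```python
import sys


def build_exit_code(outcomes):
    """Map threshold outcomes to an exit code: 0 if all passed, 1 otherwise.

    `outcomes` is a hypothetical dict of threshold description -> passed flag.
    """
    failed = [name for name, passed in outcomes.items() if not passed]
    for name in failed:
        # Report each failing threshold so it shows up in the build log.
        print(f"threshold failed: {name}", file=sys.stderr)
    return 1 if failed else 0


# Made-up outcomes for illustration; a real pipeline would fetch these
# from wherever the test results are evaluated.
outcomes = {"p95_response_ms < 500": True, "error_count <= 10": False}
exit_code = build_exit_code(outcomes)
print(f"exit code: {exit_code}")

# In a CI step, the script would end with: sys.exit(exit_code)
```

Ending the script with `sys.exit(exit_code)` is what would make the CI step, and therefore the build, fail when any threshold is not met.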

You can visit the documentation to learn more. Sign up for free to try it out and please share any feedback!

Have any questions or want to hear more?